The Rise of Liquid Cooling: How AI Is Rewriting Data Center Design

Your facilities team just got handed a rack spec sheet for the new AI cluster. The power draw? 120 kilowatts per rack. Your current cooling infrastructure tops out at maybe 15kW per rack on a good day. Welcome to the moment every data center operator has been dreading and pretending wouldn't arrive this fast.

We've been watching this collision between AI compute demands and physical reality play out across thousands of facilities. The numbers don't lie: NVIDIA's latest GPU generations are pushing 1,200W to 2,700W per chip. Air cooling hits a practical wall around 700W per chip. The math stopped working sometime in 2024, and now everyone's scrambling to catch up.

The Physics Problem

Air cooling can't remove heat fast enough when GPU racks exceed 50kW. At 120kW per rack, you'd need industrial fans that consume more power than the servers they're trying to cool.
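To put rough numbers on that, here's a minimal sketch of the sensible heat balance: airflow equals heat load divided by (air density × specific heat × temperature rise). The air properties and the 15 K inlet-to-outlet temperature rise are our assumptions for illustration, not measurements from any particular facility.

```python
# Assumed air properties near sea level at typical data hall temperatures.
AIR_DENSITY_KG_M3 = 1.2
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0
M3S_TO_CFM = 2118.88  # cubic meters per second -> cubic feet per minute


def airflow_m3s(heat_load_w: float, delta_t_k: float) -> float:
    """Volumetric airflow needed to carry heat_load_w at a given air temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_load_w / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_k)


if __name__ == "__main__":
    # Rack densities mentioned in this article; 15 K rise is an assumption.
    for rack_kw in (15, 50, 120):
        flow = airflow_m3s(rack_kw * 1000, delta_t_k=15.0)
        print(f"{rack_kw:>3} kW rack: {flow:.2f} m^3/s ({flow * M3S_TO_CFM:,.0f} CFM)")
```

Under these assumptions, a 120kW rack needs about 6.6 cubic meters of air per second (roughly 14,000 CFM), eight times what a 15kW rack needs through the same footprint.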

The infrastructure implications go far beyond just swapping out some cooling units. We're talking about replumbing entire data centers, installing coolant distribution units, implementing leak detection systems, and redesigning power and thermal management from the ground up. This isn't an upgrade path. It's a complete architectural rethink.

Every generation pushes further past the air cooling ceiling of roughly 700W per chip. The GB200 at 2,700W isn't an outlier — it's the new baseline. Facilities that can't cool these chips can't host the AI workloads that are driving the industry's revenue growth.

The Technology That's Actually Working

Direct to chip liquid cooling has emerged as the leading solution for these power densities. But it's not the only approach, and understanding the differences matters when you're making infrastructure decisions that will define your facility for the next decade.

Direct to chip cooling drops chip operating temperatures by roughly 20 degrees Celsius compared to air cooling, which prevents the thermal throttling that kills performance when GPUs hit their thermal limits.

12%: total power reduction with direct liquid cooling (ASME validated).

The technology works through Coolant Distribution Units that circulate coolant through the server cold plates. The heat gets transferred to the facility's main cooling loop, where it can be rejected through traditional chillers or economizers for free cooling in suitable climates. It sounds straightforward until you start thinking about redundancy, leak detection, and what happens when a coolant pump fails in a 120kW rack.
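The pump failure question is easier to reason about with a quick heat balance. The sketch below assumes plain water as the coolant and a 10 K temperature rise across the rack, both our assumptions; real loops often run glycol mixes with lower specific heat, so treat the output as a ballpark.

```python
WATER_SPECIFIC_HEAT_J_KG_K = 4186.0  # assumed: plain water
WATER_DENSITY_KG_M3 = 1000.0         # assumed: plain water


def coolant_flow_lpm(heat_load_w: float, delta_t_k: float) -> float:
    """Liters per minute of coolant needed to absorb heat_load_w at a given temperature rise."""
    mass_flow_kg_s = heat_load_w / (WATER_SPECIFIC_HEAT_J_KG_K * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY_KG_M3 * 1000.0 * 60.0


def temperature_rise_k(heat_load_w: float, flow_lpm: float) -> float:
    """Coolant temperature rise across the rack for a given flow rate."""
    mass_flow_kg_s = flow_lpm / 60.0 / 1000.0 * WATER_DENSITY_KG_M3
    return heat_load_w / (WATER_SPECIFIC_HEAT_J_KG_K * mass_flow_kg_s)


if __name__ == "__main__":
    normal_flow = coolant_flow_lpm(120_000, delta_t_k=10.0)
    print(f"Normal flow: {normal_flow:.0f} L/min")
    # Hypothetical failure case: one of two pumps drops out and flow halves.
    print(f"Rise at half flow: {temperature_rise_k(120_000, normal_flow / 2):.1f} K")
```

With these inputs a 120kW rack needs roughly 172 liters per minute, and losing half the flow doubles the coolant temperature rise to about 20 K. That's the reason redundant pumps matter at this density.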

Direct to Chip vs Immersion Cooling

There are two primary liquid cooling approaches competing for dominance, and each has real tradeoffs that operators need to weigh carefully.

Direct to chip cooling, often abbreviated DLC for direct liquid cooling, uses cold plates mounted directly on processors with coolant piped to each server. It's the approach most hyperscalers are deploying because it integrates with existing server designs and doesn't require completely rethinking how you handle hardware. You can still access components for maintenance. You can still use standard server chassis. The cooling infrastructure is additive rather than a replacement.

Immersion cooling submerges entire servers in dielectric fluid. It's thermally superior — the fluid contacts every heat producing component, not just the CPU and GPU. Single phase immersion keeps the fluid below boiling point and circulates it through external heat exchangers. Two phase immersion actually boils the fluid off the chips and condenses it back, achieving even higher heat transfer rates. The theoretical cooling capacity is enormous.

But immersion has practical challenges that the vendor demos don't always highlight. Hardware maintenance means pulling servers out of fluid tanks. Component compatibility is more restrictive since not everything tolerates being submerged. The dielectric fluids themselves are expensive, and some are under increasing environmental scrutiny. Facility design is fundamentally different since you're dealing with fluid filled tanks instead of traditional rack rows.

For most operators, direct to chip is winning on practicality. It delivers the cooling performance needed for current GPU generations while fitting into operational models that data center teams already understand. Immersion cooling has a future, particularly for the most extreme density deployments, but the ecosystem isn't as mature.

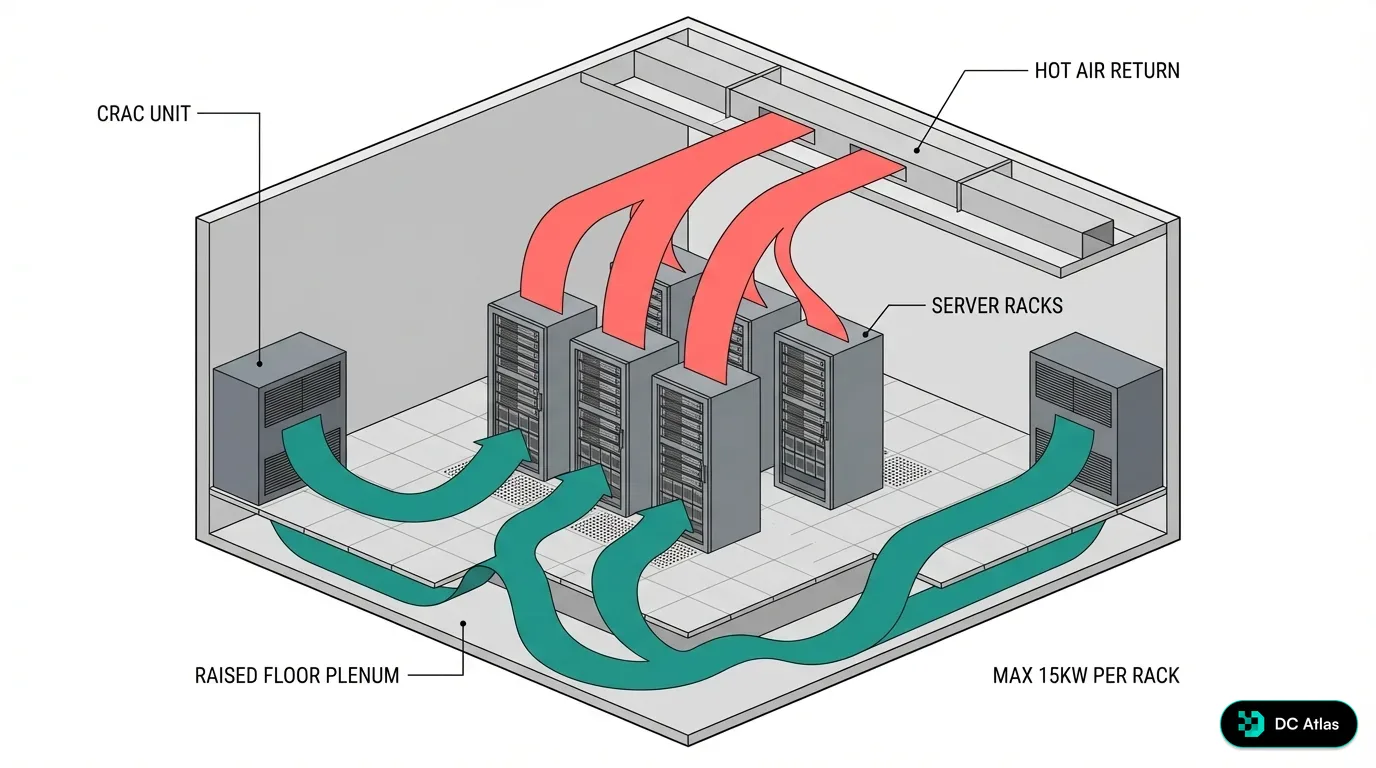

Traditional raised floor air cooling with CRAC units. Cold air flows under the floor and up through perforated tiles, and hot air returns at ceiling level. Maximum density: 15kW per rack with PUE around 1.6. This approach can't support modern AI GPU workloads exceeding 50kW per rack.

Technical & Economic Comparison by Cooling Type

| Dimension | Air Cooling | Liquid Cooling |
|---|---|---|
| Max rack density | 10–15 kW/rack (mainstream) | 80–120 kW/rack (DLC standard) |
| Max chip TDP | ~500–700W practical limit | 1.5–2.0 kW comfortably supported |
| Cooling energy share | 35–40% of total energy | 20–25% for high-liquid sites |
| Typical PUE | 1.4–1.7 (modern, well-run) | 1.15–1.25 mainstream DLC |
| Floor space per MW | 8,000–10,000 sqft (gross) | 3,000–5,000 sqft at 80–120 kW |
| Retrofit complexity | Low: existing CRAH/CRAC | High: piping, CDUs, leak detection |
| Revenue per sqft | 1.0× baseline (10–15 kW) | 3–5× at 80–120 kW/rack |
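A few lines of arithmetic show how the density and PUE rows interact. This is a simplified sketch: the function names are ours, and the overhead share lumps cooling together with power distribution losses and every other non-IT load, so it only approximates the cooling energy share in the table.

```python
import math


def racks_per_mw(rack_kw: float) -> int:
    """Racks needed to host 1 MW of IT load at a given per-rack density."""
    return math.ceil(1000.0 / rack_kw)


def facility_power_mw(it_load_mw: float, pue: float) -> float:
    """Total facility draw implied by PUE (total power / IT power)."""
    return it_load_mw * pue


def overhead_share(pue: float) -> float:
    """Fraction of total facility power that is not IT load."""
    return (pue - 1.0) / pue


if __name__ == "__main__":
    # Densities and PUE values picked from the ranges in the table above.
    for label, rack_kw, pue in (("air", 15, 1.6), ("liquid", 120, 1.2)):
        print(
            f"{label:>6}: {racks_per_mw(rack_kw)} racks per MW, "
            f"{facility_power_mw(10, pue):.1f} MW total for 10 MW of IT, "
            f"{overhead_share(pue):.0%} overhead"
        )
```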

Who's Actually Deploying This

The hyperscalers moved first because they had to. They couldn't wait for the market to catch up because their AI development timelines demanded the compute capacity now.

Meta's approach is instructive. Their Grand Rapids, Michigan facility was purpose built for AI training with liquid cooling from the ground up. Rather than trying to retrofit, they designed the plumbing, power distribution, and floor layout around liquid cooled racks from day one. The result is a facility that can support rack densities most traditional data centers can't touch.

Google has taken a hybrid path, deploying liquid cooling for their TPU clusters while maintaining air cooling for less power hungry workloads. This pragmatic approach lets them target cooling investment where the thermal challenges are most acute rather than converting entire facilities.

The enterprise colocation providers are catching up fast. Equinix, Digital Realty, and CyrusOne have all announced liquid cooling ready capacity in key markets. They're seeing customer demands for 50kW to 120kW racks that simply can't be served with traditional cooling approaches. The choice is either invest in liquid cooling capability or lose the highest value customers to providers who can deliver the power densities AI workloads require.

What's particularly telling is the smaller operators. Regional providers who historically served enterprise IT are now facing demands from AI companies who need GPU clusters deployed in specific geographies for latency or data sovereignty reasons. These operators don't have the capital reserves of a hyperscaler, so they're partnering with cooling technology vendors on managed solutions that lower the upfront barrier.

The Infrastructure Reality Check

Retrofitting existing facilities for liquid cooling is where the rubber meets the road on economics. You're not just installing new cooling equipment. You're essentially replumbing parts of the facility with industrial grade piping, coolant distribution units, and monitoring systems that can detect leaks before they take down million dollar GPU clusters.

We're tracking over 30 GW of capacity under construction and nearly 10 GW in planning stages. The operators building these facilities today aren't debating whether to include liquid cooling capability. They're debating how much of the floor space to make liquid-ready versus provisioning it entirely for high-density AI workloads.

The economics work out when you factor in the full picture. Yes, liquid cooling infrastructure costs more upfront, typically adding 15 to 30 percent to the mechanical budget of a new build, and significantly more for retrofits where structural changes are needed. But cooling typically represents 35 to 40% of total energy consumption in an air cooled data center, versus 20 to 25% at sites that lean heavily on liquid cooling.

More importantly, liquid cooling enables rack densities that generate significantly more revenue per square foot. When you can support 120kW racks instead of 15kW racks in the same floor space, the revenue density improvement often pays for the cooling upgrade within 18 to 24 months.
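Here's a minimal sketch of that payback math. Every dollar figure in it is a hypothetical placeholder we made up for illustration; plug in your own capex and contract numbers before drawing conclusions.

```python
import math


def payback_months(
    upgrade_capex: float,
    added_monthly_revenue: float,
    added_monthly_opex: float = 0.0,
) -> float:
    """Simple (undiscounted) payback period for a cooling upgrade, in months."""
    net_monthly_gain = added_monthly_revenue - added_monthly_opex
    if net_monthly_gain <= 0:
        return math.inf  # the upgrade never pays back on these inputs
    return upgrade_capex / net_monthly_gain


if __name__ == "__main__":
    # Hypothetical inputs: $2.4M retrofit, $150k/month added revenue,
    # $30k/month added operating cost.
    months = payback_months(2_400_000, 150_000, 30_000)
    print(f"Payback: {months:.0f} months")
```

This ignores financing costs and ramp-up time, so a real model would land somewhat later than the simple ratio suggests.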

The Operational Learning Curve

There's a dimension to this transition that doesn't show up in the capital expenditure models: people. Most data center operations teams have spent their careers managing air cooled environments. Liquid cooling introduces plumbing, fluid management, and failure modes that are genuinely different from anything they've dealt with before.

Leak detection becomes mission critical in a way it never was with air cooling. A coolant leak in a rack full of H100 GPUs isn't just a facilities issue. It's potentially millions of dollars in hardware damage and weeks of lost compute revenue. Operators are deploying multi-layer detection systems with moisture sensors at every connection point, underfloor monitoring, and automated shutoff valves that can isolate a leak in seconds.
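The decision logic behind that kind of system can be sketched in a few lines. This is an illustrative model only: the `SensorReading` type, the zone names, and the `min_wet_sensors` threshold are all hypothetical, and a production system would run on dedicated controls hardware rather than a script.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class SensorReading:
    sensor_id: str
    zone: str   # isolation zone the sensor belongs to, e.g. one rack manifold
    wet: bool   # True when the moisture sensor detects liquid


def zones_to_isolate(readings: Iterable[SensorReading], min_wet_sensors: int = 1) -> List[str]:
    """Return the zones whose shutoff valves should close, sorted by name."""
    wet_counts: dict = {}
    for reading in readings:
        if reading.wet:
            wet_counts[reading.zone] = wet_counts.get(reading.zone, 0) + 1
    return sorted(zone for zone, count in wet_counts.items() if count >= min_wet_sensors)


if __name__ == "__main__":
    readings = [
        SensorReading("m-01", "rack-07", wet=True),
        SensorReading("m-02", "rack-07", wet=False),
        SensorReading("m-03", "rack-08", wet=False),
    ]
    print(zones_to_isolate(readings))
```

Raising `min_wet_sensors` trades response speed for fewer false trips, which is the kind of tuning decision operators have to make per site.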

Coolant chemistry matters more than most people expect. The fluid needs to maintain its thermal properties over years of continuous circulation. Contamination, degradation, and microbial growth are all real concerns that require monitoring and maintenance protocols most data center teams haven't had to think about before.

The skills gap is real. We're seeing operators invest heavily in training programs, hiring from industrial process cooling backgrounds, and partnering with cooling vendors on managed service agreements that provide expert support during the transition period.

Where The Market Is Headed

The adoption timeline is accelerating faster than most industry predictions as rack densities push past air cooling limits.

The technology is moving beyond just direct to chip cooling. We're seeing hybrid systems that use liquid cooling for the highest power components while maintaining air cooling for ancillary equipment, modular approaches that can scale liquid cooling capacity as AI workload demands increase, and smart controls that optimize coolant flow and temperature based on real time compute loads.
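As a rough illustration of load-based flow control, the sketch below computes a flow setpoint from the current rack load and clamps it to pump limits. It assumes plain water, a 10 K target temperature rise, and made-up pump limits. It is a simple feedforward calculation of our own, not how any specific vendor's controller works.

```python
WATER_SPECIFIC_HEAT_J_KG_K = 4186.0  # assumed: plain water, about 1 kg per liter


def target_flow_lpm(
    rack_load_kw: float,
    delta_t_k: float = 10.0,       # assumed target coolant temperature rise
    min_flow_lpm: float = 20.0,    # hypothetical pump minimum
    max_flow_lpm: float = 200.0,   # hypothetical pump maximum
) -> float:
    """Coolant flow setpoint for the current load, clamped to the pump's range."""
    required_lpm = rack_load_kw * 1000.0 / (WATER_SPECIFIC_HEAT_J_KG_K * delta_t_k) * 60.0
    return max(min_flow_lpm, min(max_flow_lpm, required_lpm))


if __name__ == "__main__":
    # Idle, partial, and overloaded rack loads in kW.
    for load_kw in (0, 60, 200):
        print(f"{load_kw:>3} kW -> {target_flow_lpm(load_kw):.0f} L/min")
```

The minimum flow keeps coolant moving through an idle rack, and the clamp at the top is where a real controller would raise an alarm rather than silently saturate.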

What we're not seeing yet is a clear standard for liquid cooling interfaces and coolants across different server vendors. The Open Compute Project and ASHRAE are both working on standardization efforts, but the pace of GPU power increases is outrunning the standards bodies. That's creating integration complexity for operators who want to support multiple hardware platforms — your NVIDIA racks might use different fittings and coolant specs than your AMD or Intel setups.

The supply chain for liquid cooling components is also tightening. Cold plate manufacturers, CDU vendors, and specialized piping contractors are all seeing demand that outstrips their current capacity. Lead times for some cooling components have stretched to 6 to 9 months, which means operators who wait to order until construction starts are already behind schedule.

The Retrofit Challenge

Legacy facilities face a choice: invest in major infrastructure upgrades or accept that they can't compete for the highest value AI workloads. There's no middle ground when physics sets the limits.

We think the liquid cooling transformation is past the tipping point. The operators who move quickly will capture the AI workload growth. The ones who wait will watch their highest revenue customers migrate to facilities that can deliver the power densities modern compute demands require.

This isn't just a cooling upgrade. It's a fundamental shift in how we design, build, and operate data center infrastructure. And if you're not planning for it now, you're already behind.
